|

|||||||||

| Security | |||||||||

| Hardware Architecture for Fully Homomorphic Encryption | |||||||||

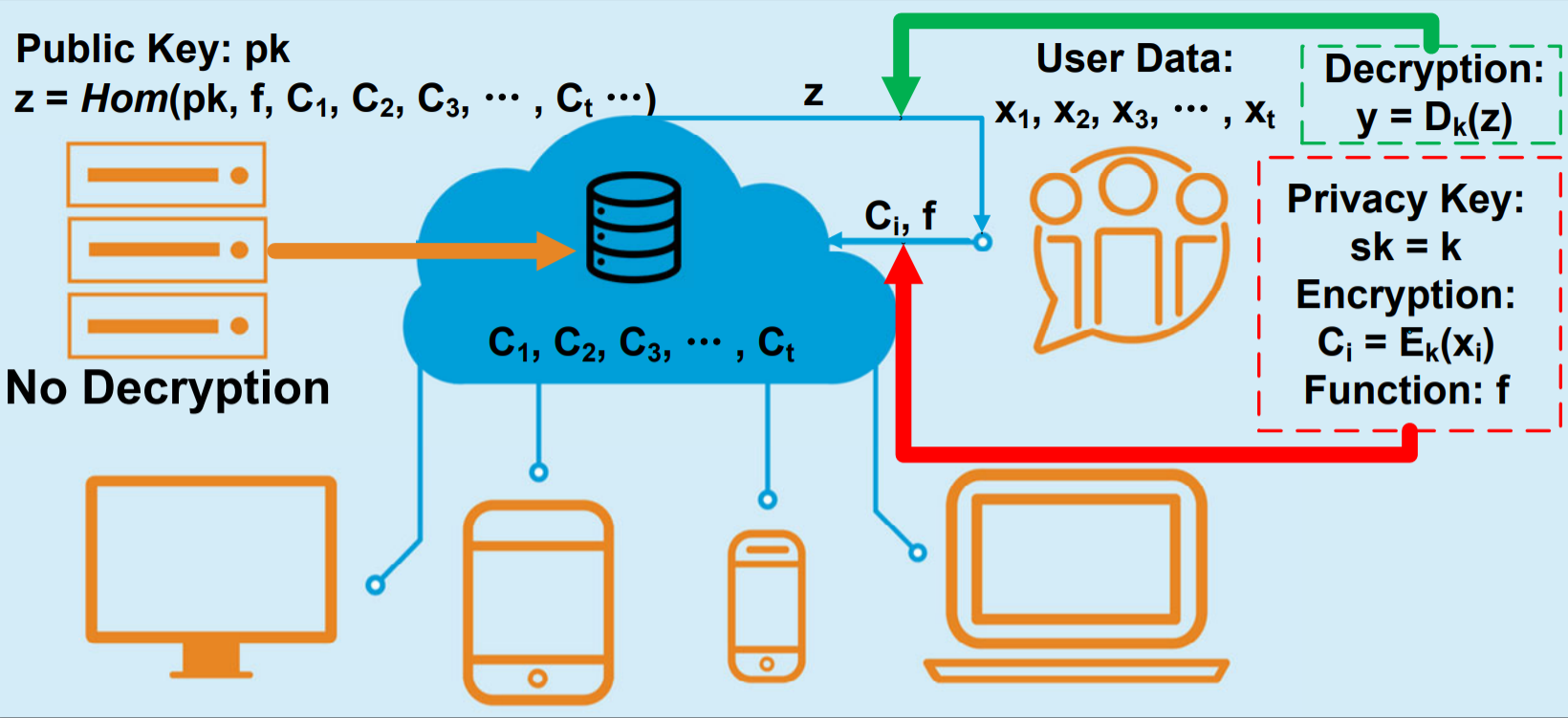

Fully homomorphic encryption (FHE) is an emerging technology that enables an untrusted party (e.g., a cloud server) to perform analytics directly on ciphertexts without leaking any information about the original plaintext. However, current FHE schemes are still too computationally intensive to be applied transparently in real-world applications. We are therefore working to accelerate FHE by co-optimizing its algorithms and hardware architecture. |
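To make "computing directly on ciphertexts" concrete, here is a minimal sketch using the textbook Paillier cryptosystem. Paillier is only additively homomorphic, so it is not FHE and is not one of the schemes this project targets. The tiny primes and the function names (`keygen`, `encrypt`, `decrypt`, `add_encrypted`) are assumptions chosen for illustration, and the parameters are far too small to be secure.

```python
import math
import random


def keygen(p=293, q=433):
    """Toy Paillier key generation from two small primes (insecure, illustration only)."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p - 1, q - 1)
    g = n + 1
    # mu is the inverse of L(g^lam mod n^2) modulo n, where L(x) = (x - 1) // n.
    mu = pow((pow(g, lam, n * n) - 1) // n, -1, n)
    return (n, g), (lam, mu)


def encrypt(pub, m, rng=random):
    """Encrypt an integer 0 <= m < n with fresh randomness r coprime to n."""
    n, g = pub
    while True:
        r = rng.randrange(1, n)
        if math.gcd(r, n) == 1:
            break
    return (pow(g, m, n * n) * pow(r, n, n * n)) % (n * n)


def decrypt(pub, priv, c):
    """Recover the plaintext from ciphertext c using the private key."""
    n, _ = pub
    lam, mu = priv
    return ((pow(c, lam, n * n) - 1) // n * mu) % n


def add_encrypted(pub, c1, c2):
    """Multiplying ciphertexts adds the underlying plaintexts modulo n."""
    n, _ = pub
    return (c1 * c2) % (n * n)


pub, priv = keygen()
c1, c2 = encrypt(pub, 17), encrypt(pub, 25)
total = decrypt(pub, priv, add_encrypted(pub, c1, c2))  # server never saw 17 or 25
```

The party that calls `add_encrypted` needs only the public key. Even in this toy, each operation is a modular exponentiation or multiplication on numbers much larger than the plaintext, which hints at why homomorphic schemes are costly to compute.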

|

||||||||

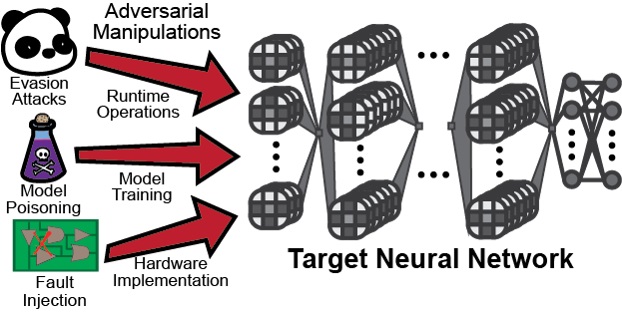

| Hardware Backdoor Attacks on Machine Learning Systems | |||||||||

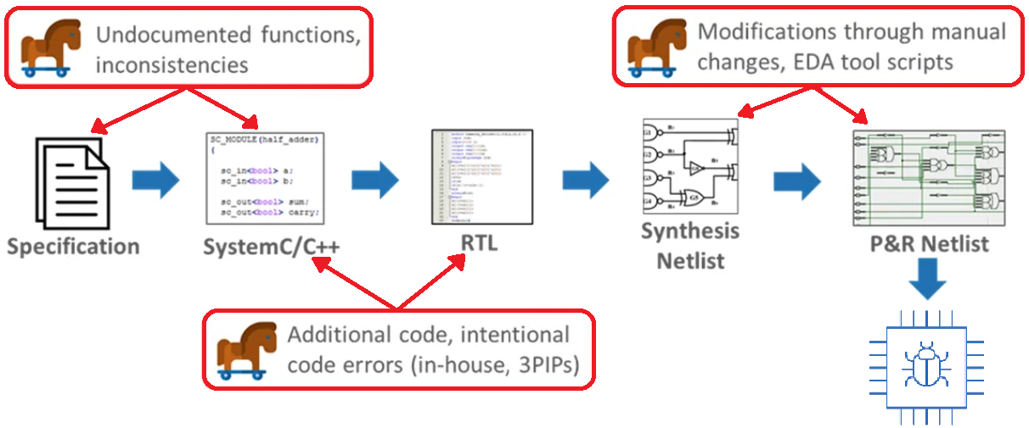

Distributed supply chains make designing application-specific hardware economically feasible, but adversarial entities can compromise them to maliciously alter the intended design. Our work on hardware backdoors in machine learning systems explores the capabilities of the attacker in this setting, and we use this understanding to determine effective defensive measures. We have extended the threat model into the production phase for the first time. We have also demonstrated the many facets that physical constraints bring to adversarial deep learning, and the need to extend the scope of adversarial machine learning to incorporate novel attack and defense strategies. |

|

||||||||

| Hardware Security | |||||||||

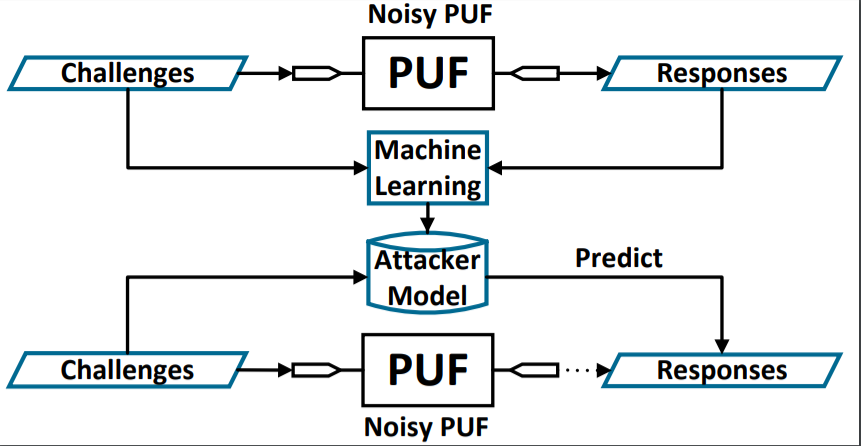

With the rapid development and globalization of the semiconductor industry, hardware security has emerged as a critical concern. New attack and tampering methods continuously challenge current hardware protection methods, so combating these powerful attacks is of great importance in securing hardware devices. We are developing novel techniques to improve the performance of physical unclonable functions (PUFs) and hardware obfuscation. We are also interested in applying hardware security techniques to Internet of Things (IoT) systems, such as smart grids. |

|

||||||||

| Hardware Architecture | |||||||||

| Accelerating Reinforcement Learning on the Edge | |||||||||

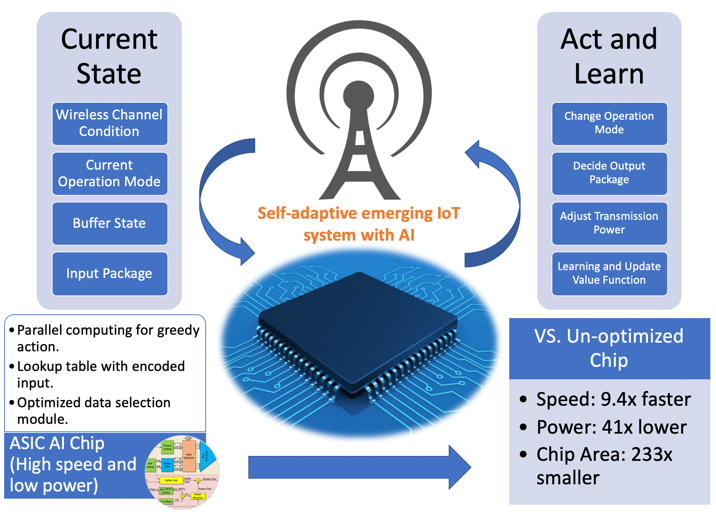

Internet of Things (IoT) sensors often operate in unknown dynamic environments featuring time-sensitive data sources, dynamic processing loads, and communication channels of unknown statistics. This is the natural application domain of reinforcement learning (RL), where optimal decision policies need to be computed or learned online. We exploit the concept of post-decision states (PDSs) to leverage system knowledge, which can help increase the learning rate. Although PDSs can improve the rate of convergence to the optimum, this comes at the cost of increased action-selection complexity. Hardware acceleration is therefore a promising direction for enabling real-time applications of PDS-based learning. We seek to develop efficient architectures that overcome the computational bottlenecks of PDS-based RL. |
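The PDS idea can be sketched on a toy transmit-buffer problem. Everything in the block below is an assumption made for illustration and is not the group's system: the buffer model, the cost functions, and the constants `B`, `A`, `GAMMA`, and `ALPHA`. The known part of the dynamics (sending `a` packets leaves `s - a` in the buffer) defines the post-decision state, and only the effect of the unknown arrivals is learned. For simplicity the sketch always acts greedily, with no exploration.

```python
import random

B, A = 8, 3              # buffer size and max packets sent per step (hypothetical)
GAMMA, ALPHA = 0.9, 0.1  # discount factor and learning rate (hypothetical)


def known_cost(s, a):
    """Known part of the cost: transmit energy plus holding cost of the backlog."""
    return 0.5 * a * a + (s - a)


def greedy(V, s):
    """Pick the action minimising known cost plus the value of the resulting PDS."""
    actions = range(min(s, A) + 1)
    best = min(actions, key=lambda a: known_cost(s, a) + V[s - a])
    return best, known_cost(s, best) + V[s - best]


def train(steps=20000, seed=0):
    """Learn one value per post-decision state from observed arrivals."""
    rng = random.Random(seed)
    V = [0.0] * (B + 1)
    s = 0
    for _ in range(steps):
        a, _ = greedy(V, s)
        pds = s - a                           # known, deterministic transition
        arrivals = rng.choice([0, 1, 1, 2])   # distribution unknown to the learner
        unknown_cost = 10.0 * max(pds + arrivals - B, 0)  # overflow penalty
        s_next = min(pds + arrivals, B)
        _, best_next = greedy(V, s_next)      # minimisation over actions inside the update
        V[pds] += ALPHA * (unknown_cost + GAMMA * best_next - V[pds])
        s = s_next
    return V


V = train()
action, _ = greedy(V, 5)  # learned decision when 5 packets are queued
```

Note that `greedy` runs twice per step, once to act and once inside the value update. That repeated minimisation over actions is the kind of per-step work the paragraph above describes as increased action-selection complexity.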

|

||||||||

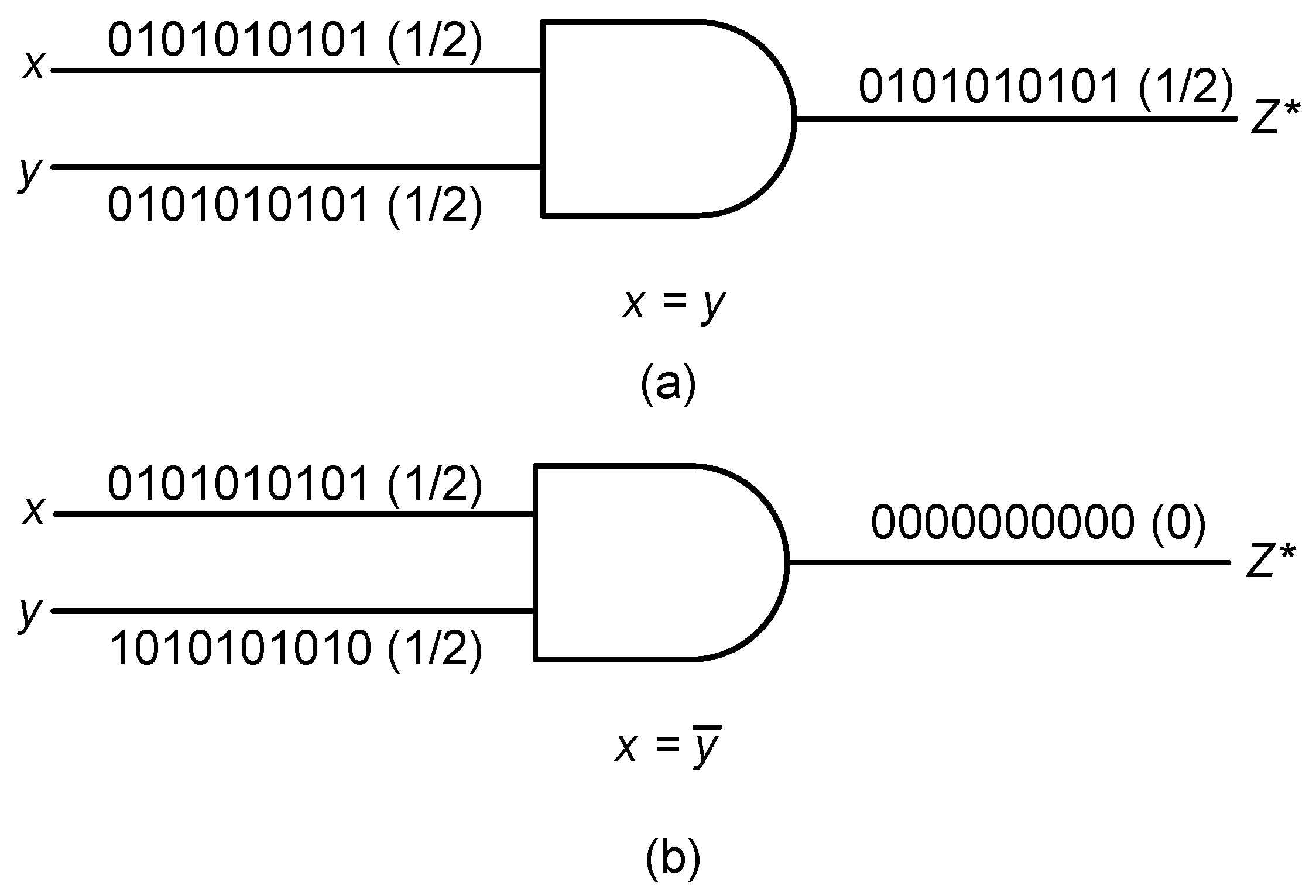

| Approximate/Stochastic Computing | |||||||||

Energy- and area-efficient IC design has recently attracted great interest given the proliferation of computing devices, the resource-constrained nature of these systems, the desire to improve computing capability, and the need to reduce cost. Stochastic computing (SC) has emerged as a promising alternative to deterministic binary computing: it represents and processes digital data as the probability of ones in long pseudo-random bit-streams. The major advantages of SC are that it enables very low-cost implementations of arithmetic operations using standard logic elements and that it is more resistant to faults and uncertainty. Approximate computing, on the other hand, reduces hardware cost or improves the speed of logic circuits by relaxing the quality of computation. We seek to leverage these emerging techniques to develop efficient hardware architectures for various applications. We are also interested in novel approximate logic design methodologies that use machine learning algorithms to incorporate the input data distribution and optimize performance for specific applications. |
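A short software sketch shows why SC arithmetic is cheap. In the standard unipolar encoding, a value in [0, 1] is the fraction of ones in a bit-stream, and a single AND gate multiplies two independent streams. The helper names, stream length, and input values below are assumptions for illustration, and the software random generator stands in for the pseudo-random sources a hardware design would use.

```python
import random


def to_bitstream(p, length, rng):
    """Encode a probability p in [0, 1] as a random bit-stream (unipolar format)."""
    return [1 if rng.random() < p else 0 for _ in range(length)]


def from_bitstream(bits):
    """Decode a bit-stream back to a value: the fraction of ones."""
    return sum(bits) / len(bits)


def sc_multiply(a_bits, b_bits):
    """A bitwise AND multiplies the values of two independent unipolar streams."""
    return [a & b for a, b in zip(a_bits, b_bits)]


rng = random.Random(0)
N = 4096                      # stream length (hypothetical); longer means less noise
a = to_bitstream(0.5, N, rng)
b = to_bitstream(0.6, N, rng)
product = from_bitstream(sc_multiply(a, b))  # approximates 0.5 * 0.6, up to sampling noise

# A single flipped bit changes the decoded value by exactly 1 / N.
faulty = list(a)
faulty[0] ^= 1
error = abs(from_bitstream(faulty) - from_bitstream(a))
```

The result is only an estimate whose accuracy grows with the stream length, which is the usual trade-off in SC between hardware cost and latency. The last three lines illustrate the fault-tolerance claim: every bit carries equal weight, so one fault perturbs the value only slightly.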

|

||||||||

| Machine Learning | |||||||||

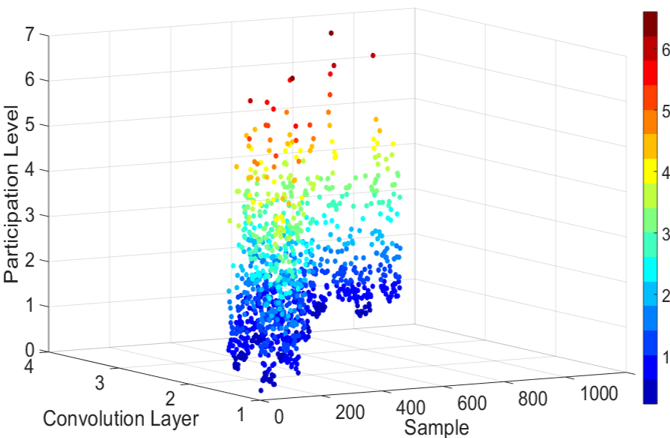

| Improving the Efficiency of Training Neural Networks | |||||||||

The learning process of a biological system is a continuous phenomenon with limited external intervention. As training progresses, the rate of learning, the number of neurons, and the number of synapses are modified based on the circumstances. Current deep learning research, by contrast, focuses on a fixed training process with a pre-defined architecture to obtain maximum accuracy. We are developing methods to accelerate training so that it follows a pattern similar to biological learning, i.e., learning faster as learning progresses. These methods are also applicable to online and incremental learning settings. |

|

||||||||

| Adversarial Machine Learning | |||||||||

In recent years, deep learning has demonstrated superior performance in various application fields, including computer vision, natural language processing, autonomous vehicles, and robotics. For real-world deployment, however, security and robustness against attacks have emerged as critical concerns, especially in safety-critical applications. We are investigating several aspects of adversarial machine learning, including adversarial examples, poisoning attacks, and backdoor injection. We are developing new attack methods and countermeasures for these topics, such as novel adversarial example generation methods that increase visual fidelity and poisoning attacks that operate on a per-class basis. |
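As a concrete picture of an adversarial example, the sketch below applies one step of the fast gradient sign method (FGSM), a standard baseline attack, to a tiny logistic-regression classifier. It is not one of the generation methods developed in this project. The weights, input, and perturbation budget `eps` are hypothetical values chosen for illustration.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def margin(w, b, x, y):
    """Signed score y * (w.x + b); positive means x is classified as label y."""
    return y * (sum(wi * xi for wi, xi in zip(w, x)) + b)


def sign(g):
    return (g > 0) - (g < 0)


def fgsm(w, b, x, y, eps):
    """One FGSM step on the logistic loss -log(sigmoid(y * (w.x + b)))."""
    # Gradient of the loss with respect to the input x.
    scale = -y * sigmoid(-margin(w, b, x, y))
    grad = [scale * wi for wi in w]
    # Move every input coordinate by eps in the direction that increases the loss.
    return [xi + eps * sign(g) for xi, g in zip(x, grad)]


w, b = [2.0, -1.0, 0.5], 0.1   # hypothetical fixed model
x, y = [0.3, 0.2, 0.4], 1      # input and its true label in {-1, +1}
x_adv = fgsm(w, b, x, y, eps=0.25)

clean_ok = margin(w, b, x, y) > 0        # original input is classified correctly
fooled = margin(w, b, x_adv, y) < 0      # perturbed input crosses the decision boundary
```

No coordinate moves by more than `eps`, yet the prediction flips. Attacks on deep networks follow the same pattern, with the input gradient obtained by backpropagation.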

|

||||||||